The Fourth Mission

In this installment of SQLCoOp, we are sharing our views on something we’ve learned this month. While I learned quite a bit this month in order to create a new SQLite Database with test data that mapped back to my SQL Server database, I will limit this post to how my first PowerShell script aided me in creating a new SQLite Database from Embarcadero’s ER/Studio Data Architect.

The Project

I’ve been working on a project recently where we have a SQL Server backend on our server and SQLite databases on our client machines. The two databases have quite a few tables in common, but the two database platforms don’t share the same toolsets. Here are the hurdles I had to overcome.

- The SQL language that SQLite uses is similar to T-SQL, but not the same. This means scripting tables, constraints, and other SQL objects is slightly different.

- I use Embarcadero’s ER/Studio Data Architect (DA) to do all of my data modeling, but there isn’t a driver that will work with DA to compare my database model to a SQLite database so that I can keep them in sync.

- I needed to create test data in both databases, but I don’t have an environment where the databases can coexist so that I can write the proper insert scripts.

In this post, I’ll focus on the first two hurdles. The third hurdle was solved using Red Gate’s SQL DataGenerator, Python, and SSIS. I will save this story for another time since it is worthy of a blog post all on its own.

Hurdle 1: Understanding SQLite

The first thing I had to learn was the ins and outs of SQLite. I acquired a great book, Using SQLite, and started reading. I also searched the depths of the internet. What I found was that SQLite likes to store almost everything as integer, numeric, or text values, BUT you can still declare columns with the data types we are familiar with, like varchar, datetime, etc. They just get “interpreted” as integer, numeric, or text depending on the name of the declared data type. This is a good reference for understanding how the more familiar data types are actually stored in the SQLite database.
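To see that interpretation in action, here is a minimal Python sketch using the standard library’s sqlite3 module. The AffinityDemo table and its columns are made up for illustration. Every value is inserted as a string, and typeof() reports what SQLite actually stored.

```python
import sqlite3

def stored_types():
    """Return the storage class SQLite used for each column of one row."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical table declared with familiar SQL Server style type names.
    conn.execute(
        "CREATE TABLE AffinityDemo ("
        " Id int,"               # name contains INT  -> INTEGER affinity
        " Name varchar(50),"     # name contains CHAR -> TEXT affinity
        " CreatedDTS datetime,"  # no rule matches    -> NUMERIC affinity
        " IsActive bit)"         # no rule matches    -> NUMERIC affinity
    )
    # Every value goes in as a string; the column affinity decides what is kept.
    conn.execute(
        "INSERT INTO AffinityDemo VALUES ('42', 'Ada', '2014-05-01 08:30:00', '1')"
    )
    row = conn.execute(
        "SELECT typeof(Id), typeof(Name), typeof(CreatedDTS), typeof(IsActive)"
        " FROM AffinityDemo"
    ).fetchone()
    conn.close()
    return row

print(stored_types())
```

The date string stays text because it does not look like a number, even though the column was declared as datetime.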

When I was first learning about SQLite, I mistook the storage classes for actual data types. I thought SQLite was going to use a type of implicit conversion, but SQLite is not the same as SQL Server. There is also a big benefit to using the more familiar data types instead of sticking to the storage classes that SQLite uses. The benefit has to do with the ADO.NET class and making life easier for your application developer who has to take data from SQL Server and get it into SQLite.

NOTE: You REALLY need to understand how dates work in SQLite. How the date and time are stored in the underlying database (string, integer, numeric) determines what “zero” is equivalent to. Read this to find out more.
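As a small illustration of the “zero” problem, the Python sketch below asks SQLite to turn 0 into a date twice. By default a bare number is read as a Julian day number, which lands thousands of years in the past, while the 'unixepoch' modifier reads it as seconds since 1970. The function name is my own.

```python
import sqlite3

def zero_as_date():
    """Show how the same 0 maps to different dates depending on interpretation."""
    conn = sqlite3.connect(":memory:")
    row = conn.execute(
        "SELECT datetime(0),"                    # 0 read as a Julian day number
        "       datetime(0, 'unixepoch'),"       # 0 read as Unix seconds
        "       julianday('1970-01-01 00:00:00')"  # the Unix epoch as a Julian day
    ).fetchone()
    conn.close()
    return row

as_julian, as_unix, epoch_julian_day = zero_as_date()
print("0 as Julian day:  ", as_julian)
print("0 as Unix seconds:", as_unix)
print("Unix epoch as a Julian day number:", epoch_julian_day)
```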

Hurdle 2: Creating SQL Scripts for Schema Changes From ONE Environment

There are several software packages out in the world that will allow you to get visual data models of SQLite tables, but I already use Embarcadero’s ER/Studio Data Architect (DA) and I didn’t want to learn another one. I also didn’t want to have my SQL Server data models in one software package and my SQLite data models in a different one. So I had to figure out how to get around this hurdle…and I used PowerShell to do it.

The first thing I did was create my data models. My first SQLite data model was based on my initial research, which implied I should store all my data in integer and text data types since that is what the engine would be using to store the data. This was a poor assumption on my part. Since I had both my SQL Server and my SQLite database models in DA, I was able to easily update the data types in my SQLite model with their SQL Server counterparts. I did have to inspect each field manually since not all attributes were the same between the two databases. For instance, I had to use CURRENT_TIMESTAMP instead of getdate(), and I had to model auto incrementing columns differently.
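One thing to watch with that swap: in SQLite, CURRENT_TIMESTAMP produces a UTC value stored as text in the form YYYY-MM-DD HH:MM:SS, whereas getdate() in SQL Server returns the server’s local time. Here is a minimal Python sketch, with a made-up DefaultDemo table, that shows what the default actually stores.

```python
import sqlite3

def inserted_default():
    """Insert a row using only defaults and return the stored timestamp."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical table; the column definitions mirror the style used in this post.
    conn.execute(
        "CREATE TABLE DefaultDemo ("
        " Id integer PRIMARY KEY AUTOINCREMENT,"
        " InsertedDTS datetime DEFAULT CURRENT_TIMESTAMP NOT NULL)"
    )
    conn.execute("INSERT INTO DefaultDemo DEFAULT VALUES")
    value, kind = conn.execute(
        "SELECT InsertedDTS, typeof(InsertedDTS) FROM DefaultDemo"
    ).fetchone()
    conn.close()
    return value, kind

value, kind = inserted_default()
print(value, "stored as", kind)
```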

Note: This is a sample database

SQL Server database

First iteration of my SQLite database

NOTE: I was able to use the Compare and Merge Utility by selecting the option to compare against another model.

Second iteration of my SQLite database

Making a SQLite Script from ER/Studio Data Architect

Before we go down this rabbit hole, there is another piece of information that you need to know about SQLite. You can’t add foreign key constraints after the table is created. This is very frustrating for me since I know of some existing tables that need foreign key constraints and it will be a bigger task than it would be in SQL Server to get foreign key constraints applied. For this project, it means I need to script the foreign keys as well as the primary keys in the table creation script.
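The Python sketch below is one way to see this limitation for yourself. It uses cut-down versions of the two example tables, so the column lists are assumptions rather than the real model. Note that SQLite only enforces foreign keys when PRAGMA foreign_keys is switched on for the connection.

```python
import sqlite3

def foreign_key_demo():
    """Try to add a foreign key after the fact, then define it inline instead."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # enforcement is off by default
    conn.execute("CREATE TABLE Employee (EmployeeID integer PRIMARY KEY)")
    conn.execute(
        "CREATE TABLE EmployeeAward ("
        " EmployeeAwardID integer PRIMARY KEY AUTOINCREMENT,"
        " EmployeeID integer NOT NULL)"
    )
    results = {}

    # SQL Server style: add the constraint to an existing table.
    try:
        conn.execute(
            "ALTER TABLE EmployeeAward ADD CONSTRAINT FK_EmployeeAward_Employee"
            " FOREIGN KEY (EmployeeID) REFERENCES Employee(EmployeeID)"
        )
        results["alter"] = "accepted"
    except sqlite3.Error:
        results["alter"] = "rejected"

    # SQLite style: the constraint has to be part of CREATE TABLE.
    conn.execute("DROP TABLE EmployeeAward")
    conn.execute(
        "CREATE TABLE EmployeeAward ("
        " EmployeeAwardID integer PRIMARY KEY AUTOINCREMENT,"
        " EmployeeID integer NOT NULL,"
        " CONSTRAINT FK_EmployeeAward_Employee FOREIGN KEY (EmployeeID)"
        " REFERENCES Employee(EmployeeID))"
    )
    try:
        # No Employee row with ID 999 exists, so this should be refused.
        conn.execute("INSERT INTO EmployeeAward (EmployeeID) VALUES (999)")
        results["orphan_insert"] = "accepted"
    except sqlite3.IntegrityError:
        results["orphan_insert"] = "rejected"
    conn.close()
    return results

print(foreign_key_demo())
```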

There are two operations that need to be done in order to generate a SQLite script from DA. The first is to use the Generate Database Wizard to generate a whole database instead of using the Compare and Merge Utility to compare the database model with the actual database. This operation is outlined below in steps 1 through 6. The second operation is to use a simple PowerShell script to make the necessary changes to the script so that it will no longer be a T-SQL script made to run in SQL Server, but a SQL script ready to run in SQLite. This is outlined in step 7 below.

Here are the steps you’ll need to do:

Step 1

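Make sure that none of the auto incrementing fields are set up as primary keys in the database model. The reason for this is that in SQLite every auto incrementing field is a primary key by definition: the AUTOINCREMENT keyword is only valid as part of an INTEGER PRIMARY KEY column definition, so the syntax for creating one is very specific. The PowerShell script in step 7 writes PRIMARY KEY AUTOINCREMENT on the column itself, and a separate primary key constraint generated from the model would define the key a second time.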

Take a look at the EmployeeAward table in DM SQLite above. The EmployeeAwardID column is set up as an identity column, but not as a primary key. In order for me to have a visual cue that the field is a primary key, I used an object called a Domain. Domains are templates that can be used throughout the database model so that data types, defaults, and even definitions remain consistent and can easily be changed throughout an entire database model or across all database models if the Repository feature of DA is being used.
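To show why the model has to be set up this way, here is a short Python sketch that feeds three candidate definitions to SQLite. The table names T1 through T3 are placeholders. The first keeps the int data type, the second adds a separate primary key constraint on top of the auto incrementing column, and the third is the form this process aims to produce.

```python
import sqlite3

# Hypothetical definitions; only the last one should be accepted.
CANDIDATES = {
    "int with autoincrement":
        "CREATE TABLE T1 (Id int PRIMARY KEY AUTOINCREMENT)",
    "autoincrement plus PK constraint":
        "CREATE TABLE T2 (Id integer PRIMARY KEY AUTOINCREMENT,"
        " CONSTRAINT PK_T2 PRIMARY KEY (Id))",
    "integer primary key autoincrement":
        "CREATE TABLE T3 (Id integer PRIMARY KEY AUTOINCREMENT)",
}

def try_definitions():
    """Run each CREATE TABLE and record 'ok' or the error SQLite reports."""
    conn = sqlite3.connect(":memory:")
    outcome = {}
    for label, ddl in CANDIDATES.items():
        try:
            conn.execute(ddl)
            outcome[label] = "ok"
        except sqlite3.Error as exc:
            outcome[label] = str(exc)
    conn.close()
    return outcome

for label, result in try_definitions().items():
    print(f"{label}: {result}")
```

The first case is also why the script in step 7 changes int to integer: SQLite only accepts AUTOINCREMENT when the column type is spelled integer.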

Step 2

Right-click the physical model that you will be working with, and select Generate Database. You will be walked through a wizard. At the end you are going to want to save your settings so that you don’t have to select them again.

Step 3

On Page 1 of the wizard, you can create individual scripts or a single long script. I’ve been doing both so that I can select a single table later on if I need to regenerate it. You will also need to select the folder that you would like the scripts to be created in. I created 3 folders for my project.

- MultiScripts (This will hold scripts for each object separately)

- SingleScript (This will hold a single script that contains all the objects.)

- Standardize (This holds the PowerShell script called StandardizeSQL.ps1 which will be explained in step 7.)

I don’t do anything on page 2 of the wizard since this script will not run in SQL Server.

Step 4

Page 3 of the wizard is where the fun starts. Set the following options for the tables you want to include in the script. These options are found on the General Options tab. All the other tabs don’t need to have their options modified…unless you used features like sparse columns that can’t be used in SQLite. Then you might need to fiddle with some of the other settings.

Step 5

Save your settings on page 4 of the wizard so that you can quickly run through the wizard next time.

Step 6

If you are asked to open your newly created script, then say no. We’re not done yet.

Step 7

Run the following PowerShell script. You can do this by right-clicking the file containing the PowerShell script and selecting Run with PowerShell. Note: The ability to run PowerShell scripts needs to be enabled first.

The script will go through all the *.sql files in the indicated folder and change the T-SQL specific syntax to the SQLite syntax. Here are the syntactical changes.

- Int will be changed to integer.

- Identity columns will be changed to primary key autoincrement columns.

- The words nonclustered and clustered will be removed from primary and foreign key constraints since all indexes are nonclustered and those keywords are not supported in SQLite.

Before you run the script below, you’ll need to change the path to your scripts (C:\SQLiteScripts) on the Get-ChildItem line.

Note: there is a space before the asterisk on that line, and there are spaces on either side of “int” in the first replace pattern.

# To run this from a PowerShell prompt, type ./StandardizeSQL.ps1
# Note: nonclustered needs to come before clustered since clustered is in the word nonclustered.
$wshell = New-Object -ComObject Wscript.Shell -ErrorAction Stop
Try
{
    # Collect every *.sql file under the scripts folder, including subfolders.
    $configFiles = Get-ChildItem -Recurse C:\SQLiteScripts *.sql -ErrorAction Stop
    foreach ($file in $configFiles)
    {
        # The parentheses read the whole file first so it can be overwritten in place.
        (Get-Content $file.PSPath) |
            Foreach-Object {$_ -replace " int ", " integer "} |
            Foreach-Object {$_ -replace "IDENTITY\(1,1\)", "PRIMARY KEY AUTOINCREMENT"} |
            Foreach-Object {$_ -replace "nonclustered", ""} |
            Foreach-Object {$_ -replace "clustered", ""} |
            Set-Content $file.PSPath
    }
    $wshell.Popup("Success! And there was much rejoicing!", 0, "Done.", 64)
}
Catch
{
    # Show the error message in a popup instead of letting the window close silently.
    $wshell.Popup($_.Exception.Message, 0, "Oops! An error occurred.", 48)
}
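If you want to try the replacement rules on a few lines before pointing the script at a folder, the same substitutions can be sketched in Python. This is only an illustration of the rules, not part of the process itself. The function name is my own, and the matching ignores case because the PowerShell -replace operator does as well.

```python
import re

# Same rules, same order as the PowerShell script.
# "nonclustered" must be handled before "clustered".
RULES = [
    (r" int ", " integer "),
    (r"IDENTITY\(1,1\)", "PRIMARY KEY AUTOINCREMENT"),
    (r"nonclustered", ""),
    (r"clustered", ""),
]

def standardize_sql(line):
    """Apply each T-SQL to SQLite substitution to a single line of script."""
    for pattern, replacement in RULES:
        line = re.sub(pattern, replacement, line, flags=re.IGNORECASE)
    return line

print(standardize_sql("EmployeeAwardID int IDENTITY(1,1),"))
print(standardize_sql("CONSTRAINT PK_Employee PRIMARY KEY CLUSTERED (EmployeeID)"))
```

Removing a keyword this way leaves a doubled space behind, which SQLite ignores.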

After you are done running the PowerShell script, you’ll have a SQLite script that you can run in your favorite SQLite querying tool.

Here are the before and after scripts for two of the tables from my example.

CREATE TABLE Employee(
    EmployeeID int NOT NULL,
    FirstName varchar(50) NOT NULL,
    LastName varchar(50) NOT NULL,
    isActive bit DEFAULT 0 NOT NULL,
    InsertedDTS datetime CONSTRAINT [DF_Employee_InsertedDTS] DEFAULT CURRENT_TIMESTAMP NOT NULL,
    ModifiedDTS datetime NULL,
    CONSTRAINT PK_Employee PRIMARY KEY CLUSTERED (EmployeeID)
)
;

CREATE TABLE EmployeeAward(
    EmployeeAwardID int IDENTITY(1,1),
    EmployeeID int NOT NULL,
    AwardName varchar(100) NOT NULL,
    AwardDate datetime NULL,
    CONSTRAINT FK_EmployeeAward_Employee FOREIGN KEY (EmployeeID)
    REFERENCES Employee(EmployeeID)
)
;

Before

CREATE TABLE Employee(
    EmployeeID integer NOT NULL,
    FirstName varchar(50) NOT NULL,
    LastName varchar(50) NOT NULL,
    isActive bit DEFAULT 0 NOT NULL,
    InsertedDTS datetime CONSTRAINT [DF_Employee_InsertedDTS] DEFAULT CURRENT_TIMESTAMP NOT NULL,
    ModifiedDTS datetime NULL,
    CONSTRAINT PK_Employee PRIMARY KEY (EmployeeID)
)
;

CREATE TABLE EmployeeAward(
    EmployeeAwardID integer PRIMARY KEY AUTOINCREMENT,
    EmployeeID integer NOT NULL,
    AwardName varchar(100) NOT NULL,
    AwardDate datetime NULL,
    CONSTRAINT FK_EmployeeAward_Employee FOREIGN KEY (EmployeeID)
    REFERENCES Employee(EmployeeID)
)
;

After
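If you don’t have a SQLite querying tool handy, a quick way to check the converted script is to run it against an in-memory database. The Python sketch below does that with the “after” script from above; the sample row values are made up.

```python
import sqlite3

# The converted script, copied from the "after" listing.
AFTER_SCRIPT = """
CREATE TABLE Employee(
    EmployeeID integer NOT NULL,
    FirstName varchar(50) NOT NULL,
    LastName varchar(50) NOT NULL,
    isActive bit DEFAULT 0 NOT NULL,
    InsertedDTS datetime CONSTRAINT [DF_Employee_InsertedDTS] DEFAULT CURRENT_TIMESTAMP NOT NULL,
    ModifiedDTS datetime NULL,
    CONSTRAINT PK_Employee PRIMARY KEY (EmployeeID)
);
CREATE TABLE EmployeeAward(
    EmployeeAwardID integer PRIMARY KEY AUTOINCREMENT,
    EmployeeID integer NOT NULL,
    AwardName varchar(100) NOT NULL,
    AwardDate datetime NULL,
    CONSTRAINT FK_EmployeeAward_Employee FOREIGN KEY (EmployeeID)
    REFERENCES Employee(EmployeeID)
);
"""

def verify_script():
    """Run the script, then return the user tables and the first generated award ID."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(AFTER_SCRIPT)
    names = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master"
        " WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
    )]
    # Made-up sample rows to exercise the defaults and the autoincrement column.
    conn.execute(
        "INSERT INTO Employee (EmployeeID, FirstName, LastName) VALUES (1, 'Ada', 'Lovelace')"
    )
    cursor = conn.execute(
        "INSERT INTO EmployeeAward (EmployeeID, AwardName) VALUES (1, 'Sample Award')"
    )
    award_id = cursor.lastrowid
    conn.close()
    return names, award_id

print(verify_script())
```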

Don’t Stop Yet

To follow our quest for SQL knowledge through this collaborative project, follow the #SQLCoOp tag on Twitter.

See you next time!!

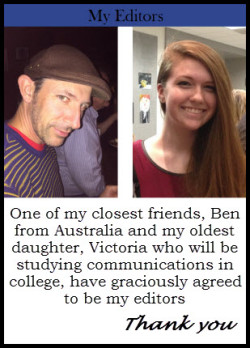

On a SQL Collaboration Quest

Four SQL professionals gathered from the four corners of the world to share their SQL knowledge with each other and with their readers: Mickey Stuewe from California, USA, Chris Yates from Kentucky, USA, Julie Koesmarno from Canberra, Australia, and Jeffrey Verheul from Rotterdam, The Netherlands. They invite you to join them on their quest as they ask each other questions and seek out the answers in this collaborative blog series. Along the way, they will also include other SQL professionals to join in the collaboration.

Original Post: On a SQL Collaboration Quest
